Nvidia's Artificial Intelligence (AI) Chips Still Need Memory. Here's Why the Micron Sell-Off Has Gone Too Far.

Key Points

Over the last year, growth investors boosted Micron stock for its leading role in supplying high-bandwidth memory to AI hyperscalers.

Google TurboQuant is a compression algorithm that reduces the amount of memory AI models require.

While TurboQuant appears to be an existential threat to Micron, smart investors understand the bearish narrative is overly dramatic.

In late March, Alphabet unveiled a new software product called TurboQuant. At a high level, TurboQuant dramatically compresses memory footprints in large language models during inference.

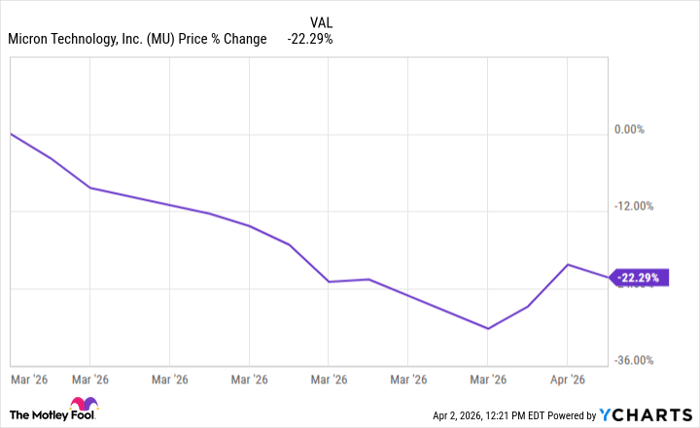

It didn't take long for headlines to circulate and cause shares of Micron Technology (NASDAQ: MU) to plunge. In large part, the sell-off was tied to Micron's relationship with Nvidia (NASDAQ: NVDA) since its high-bandwidth memory (HBM) solutions help power Nvidia's graphics processing units (GPUs).

Data by YCharts.

While the perception around Micron's vulnerability was understandable, I think the panic-selling was premature. Nvidia's artificial intelligence (AI) chips still require massive amounts of specialized memory, and TurboQuant does very little to change Micron's position in the equation.

Image source: Micron Technology.

What is causing Micron stock to plummet?

AI models must hold long conversations and extended inputs in memory while they perform complex tasks. Behind the scenes, enormous volumes of memory and storage sit alongside the GPUs that actually run these applications.

At its core, the TurboQuant algorithm shrinks the memory footprint of a model's working data while preserving model accuracy. To the casual observer, TurboQuant looks like a software shortcut that allows AI to run on less silicon. Hence, memory stocks across the board cratered on the narrative that future AI workloads will need fewer chips.

The bearish logic runs like this: If the AI hardware supercycle that fueled Micron's ascent suddenly crests and recedes, demand from the company's marquee customer, Nvidia, could vanish.

Micron's DRAM chips play a critical role in Nvidia's AI ecosystem

The underlying physics of AI chips tells a different story from the doomsday narrative above. Nvidia's GPUs are not designed as self-contained calculators. Rather, these compute chipsets are tightly integrated with external memory systems.

The chipset itself contains a limited amount of on-chip memory capable of delivering low-latency access. Nvidia needs to complement its GPU core with HBM and stacked dynamic random-access memory (DRAM) layers in order to process and hold the growing number of terabytes required for today's models.

While TurboQuant reduces the amount of working memory (RAM/VRAM) required during operation, it does not make the AI model itself smaller. As a result, the software doesn't eliminate the need to move data rapidly between the memory that holds a model's parameters and the compute units that process them.

What investors are overlooking is that an algorithm like TurboQuant might actually enable larger effective contexts or higher throughput on the same installed hardware -- subsequently driving more intensive workloads.
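A back-of-the-envelope sketch can make this point concrete. Every number below is hypothetical and chosen only for illustration: the article does not state TurboQuant's actual compression ratio, and the function name, the memory sizes, and the per-token figure are all assumptions. The sketch simply treats the model's weights as a fixed cost and asks how much working data fits in whatever memory is left over.

```python
def max_context_tokens(hbm_gb, weights_gb, cache_bytes_per_token, compression_ratio=1):
    """Tokens of working data that fit once the model weights are loaded.

    All inputs are illustrative assumptions, not vendor specifications.
    compression_ratio=4 means working data takes one-quarter of the space.
    """
    free_bytes = (hbm_gb - weights_gb) * 10**9  # memory left after weights
    if free_bytes <= 0:
        return 0
    return free_bytes * compression_ratio // cache_bytes_per_token


# Hypothetical accelerator and model (assumed values)
HBM_GB = 192                     # memory attached to the GPU
WEIGHTS_GB = 140                 # model weights; compression does not shrink these
CACHE_BYTES_PER_TOKEN = 320_000  # working memory consumed per token of context

baseline = max_context_tokens(HBM_GB, WEIGHTS_GB, CACHE_BYTES_PER_TOKEN)
compressed = max_context_tokens(HBM_GB, WEIGHTS_GB, CACHE_BYTES_PER_TOKEN,
                                compression_ratio=4)

print(f"Context that fits without compression: {baseline:,} tokens")
print(f"Context that fits with 4x compression: {compressed:,} tokens")
```

Under these assumed figures, the same memory holds four times as much context, yet the chip still needs all of its memory to hold the weights plus the larger workload. Compression changes what the hardware can do, not whether the hardware is needed.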

Nvidia's latest chip architectures -- Blackwell and Vera Rubin -- were designed with the idea of ever-larger HBM stacks precisely because memory bandwidth is becoming a bottleneck on the heels of surging capacity demand.
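The bandwidth bottleneck can also be illustrated with simple arithmetic. The sketch below rests on a simplifying assumption that is not from the article: when a model generates text one token at a time, the chip must read roughly all of the model's weights from memory for each token, so memory bandwidth caps generation speed. The function name and all figures are hypothetical, and real systems use batching and other techniques that change the picture.

```python
def decode_speed_ceiling(bandwidth_gb_per_s, weights_gb):
    """Rough upper bound on tokens generated per second for one request.

    Simplifying assumption: every token requires reading all model weights
    from memory once, so speed cannot exceed bandwidth / model size.
    """
    return bandwidth_gb_per_s / weights_gb


WEIGHTS_GB = 140  # hypothetical model size, not a real product figure

# Two hypothetical memory systems with different bandwidth
slower = decode_speed_ceiling(4_000, WEIGHTS_GB)
faster = decode_speed_ceiling(8_000, WEIGHTS_GB)

print(f"Ceiling at 4,000 GB/s: {slower:.1f} tokens per second")
print(f"Ceiling at 8,000 GB/s: {faster:.1f} tokens per second")
```

In this simplified view, doubling memory bandwidth doubles the speed ceiling even when capacity is unchanged, which is consistent with chip designs that keep adding faster and larger HBM stacks.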

In essence, Micron's DRAM is not a simple commodity bolted on to Nvidia's chips. Rather, HBM is the lifeblood that delivers the power of a GPU as advertised.

Reality check: Nvidia isn't going to ditch Micron

As one of the largest suppliers of HBM, Micron has spent years engineering memory solutions that meet the exact power, thermal, and signaling specifications of Nvidia's silicon. Switching suppliers is more than just a procurement decision. Such a move requires years of quality assurance testing, yield ramping, and system-level integration. Nvidia simply cannot afford to gamble its data center empire on an unproven alternative while its roadmap is already supported by a predictable high-volume supplier like Micron.

Moreover, TurboQuant is just a software optimization layered on top of existing hardware. In other words, the product does not introduce a direct competitor to incumbent memory technology.

Instead of cannibalizing demand, efficiency gains from TurboQuant are likely to fuel expansion in the HBM market as AI adoption becomes economically viable at scale. These dynamics should actually serve as a tailwind for GPU demand -- and each GPU still requires HBM alongside it.

The recent sell-off in Micron stock is a classic example of headline-driven myopia. In reality, Nvidia's GPUs will continue devouring Micron's DRAM chips because bandwidth -- not just capacity -- is increasingly defining AI performance and optimization.

Adam Spatacco has positions in Alphabet and Nvidia. The Motley Fool has positions in and recommends Alphabet, Micron Technology, and Nvidia. The Motley Fool has a disclosure policy.