Jensen Huang Offers Thanks. Is Samsung the Biggest Winner of Nvidia GTC?

TradingKey - At the NVIDIA GTC 2026 conference, the deep collaboration between Samsung Electronics and NVIDIA (NVDA) has become the industry's focal point.

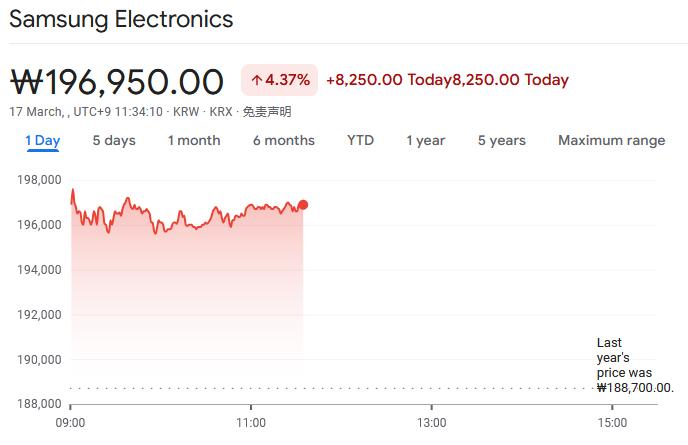

The two companies have not only achieved breakthroughs in chip foundry work but are also collaborating on memory technology and system architecture to drive industrial transformation in the AI inference era. The news lifted Samsung Electronics' shares in South Korea by more than 4% during Tuesday's trading session following the announcement.

NVIDIA Taps Samsung Foundry for LPX Chips for the First Time

At this conference, NVIDIA officially launched the LPX inference rack based on Groq technology. This product adopts a "disaggregated inference" architecture, dividing the AI inference process into two phases: prefill and token generation, handled by Rubin GPUs and Groq LPU chips, respectively.
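The two-phase split can be illustrated with a toy sketch. This is not NVIDIA's or Groq's software; the class names `PrefillWorker`, `DecodeWorker`, and `KVCache` are hypothetical, and the "model" simply emits placeholder tokens. It only shows the handoff: one pool processes the full prompt once, and a second pool generates tokens one at a time from the state it receives.

```python
from dataclasses import dataclass, field


@dataclass
class KVCache:
    """Hypothetical stand-in for the state handed from prefill to decode."""
    tokens: list = field(default_factory=list)


class PrefillWorker:
    """Hypothetical stand-in for the prefill pool (Rubin GPUs in the article)."""

    def run(self, prompt: str) -> KVCache:
        # Prefill processes the entire prompt in a single pass.
        return KVCache(tokens=prompt.split())


class DecodeWorker:
    """Hypothetical stand-in for the token-generation pool (LPUs in the article)."""

    def run(self, cache: KVCache, max_new_tokens: int) -> list:
        generated = []
        for _ in range(max_new_tokens):
            # Toy "model": the next token depends only on the context length.
            token = f"<tok{len(cache.tokens)}>"
            cache.tokens.append(token)  # each new token extends the context
            generated.append(token)
        return generated


def disaggregated_generate(prompt: str, max_new_tokens: int) -> list:
    cache = PrefillWorker().run(prompt)                # phase 1: prefill
    return DecodeWorker().run(cache, max_new_tokens)   # phase 2: token generation


output = disaggregated_generate("why is the sky blue", 3)
print(output)
```

In a real deployment the two phases have different hardware profiles, which is the stated motivation for assigning them to different chips; the sketch above captures only the control flow.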

Of these, the core Groq LP30 chip is manufactured by Samsung Electronics, marking the first time NVIDIA has awarded server chip orders to a manufacturer other than TSMC (TSM).

During his keynote speech, Jensen Huang gave special thanks to Samsung: "Thanks to Samsung for going all out to produce Groq 3 LPU chips; their capacity expansion has exceeded expectations," publicly confirming the deep collaboration between the two companies in the foundry space.

In terms of hardware configuration, a single LPX rack can accommodate 256 LP30 chips, and each Vera Rubin NVL72 system is paired with one LPX rack (a GPU-to-LPU ratio of 72:256).

According to market demand forecasts, if 100,000 LPX racks are deployed globally, Samsung's foundry business is expected to generate nearly $10 billion in revenue. By comparison, TSMC provides $1,000-$2,000 in foundry services for a single Rubin GPU; the total business value Samsung gains from LPX chip manufacturing, together with the supply of accompanying HBM and DRAM, could be three to four times that of TSMC.
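As a rough back-of-the-envelope check, the forecast can be broken down per rack and per chip. The inputs below are the article's own figures; treating "nearly $10 billion" as exactly $10 billion is an assumption, so the derived values are upper-bound estimates rather than reported numbers.

```python
# Inputs taken from the article.
RACKS_DEPLOYED = 100_000
LP30_PER_RACK = 256
GPUS_PER_NVL72 = 72

# Assumption: "nearly $10 billion" is rounded up to exactly $10 billion.
FOUNDRY_REVENUE_USD = 10e9

# Derived, illustrative figures.
total_chips = RACKS_DEPLOYED * LP30_PER_RACK
revenue_per_rack = FOUNDRY_REVENUE_USD / RACKS_DEPLOYED
revenue_per_chip = FOUNDRY_REVENUE_USD / total_chips
lpus_per_gpu = LP30_PER_RACK / GPUS_PER_NVL72

print(f"LP30 chips required:      {total_chips:,}")
print(f"Implied revenue per rack: ${revenue_per_rack:,.0f}")
print(f"Implied revenue per chip: ${revenue_per_chip:,.2f}")
print(f"LPUs per Rubin GPU:       {lpus_per_gpu:.2f}")
```

Under these assumptions the forecast implies about 25.6 million LP30 chips, roughly $100,000 of foundry revenue per rack, and at most about $390 per chip, with around 3.6 LPUs deployed for every Rubin GPU.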

A Leap in HBM4E Performance

On the same day, Samsung Electronics publicly exhibited physical chips and core wafers of its next-generation High Bandwidth Memory, HBM4E, for the first time at its GTC booth, showcasing its close synergy with NVIDIA in AI memory.

The head of Samsung's Memory Business Unit stated that the company will leverage the experience with its sixth-generation 10nm-class DRAM process gained from HBM4 mass production, along with its 4nm base die design capabilities, to accelerate the commercialization of HBM4E. Samples are expected to be provided to global customers in the second half of this year.

At this conference, Samsung was the only memory manufacturer to publicly display next-generation HBM products, a move seen as widening the technical gap with competitors like SK Hynix and Micron in the AI memory race.

In addition to HBM4E, Samsung showcased Hybrid Copper Bonding (HCB) technology. Compared to traditional Thermal Compression Bonding (TCB), HCB technology can reduce thermal resistance by 20% and support chip stacking of 16 layers or more, laying the foundation for performance enhancements in next-generation HBM products.

Samsung emphasized that robust AI systems are key to driving industry innovation and that it will continue to provide high-performance memory solutions for NVIDIA's Vera Rubin platform.

At its booth, Samsung set up a dedicated "NVIDIA Gallery" to highlight the two companies' collaborative achievements in memory and storage, including HBM4, SOCAMM2 server memory modules, and PM1763 SSDs.

Among these, SOCAMM2 is the industry's first mass-produced low-power server memory module, combining high bandwidth with flexible system integration capabilities; the PM1763 SSD utilizes a PCIe 6.0 interface to provide high-speed data transmission and high-capacity storage support for AI applications.

Samsung's All-Scenario AI Computing Strategy

Samsung also demonstrated its comprehensive portfolio in AI computing at the conference, covering scenarios from edge devices to data centers.

For personal devices, Samsung exhibited the PM9E3 and PM9E1 NAND products designed for NVIDIA DGX Spark, as well as LPDDR5X and LPDDR6 DRAM solutions for high-end smartphones and tablets. Notably, LPDDR6 increases bandwidth to 30-35 Gbps per pin and introduces adaptive voltage scaling and dynamic refresh control to support next-generation on-device AI workloads.

In the data center sector, Samsung's PM1753 SSD, as part of the reference architecture for NVIDIA Vera Rubin platform's accelerated storage infrastructure, can enhance the energy efficiency and system performance of inference workloads.

Furthermore, Samsung showcased collaborative results with NVIDIA in AI factory development. The two parties plan to introduce NVIDIA accelerated computing technology to scale Samsung's AI factories and accelerate the implementation of manufacturing digital twins based on the NVIDIA Omniverse libraries.

With the rapid growth of the AI market, the deep collaboration between Samsung and NVIDIA is expected to sustain business growth and provide momentum for technological innovation.